October 3, 2012

In Denial about Deny All?

In just a dozen or so years the computer underground has transformed itself from hooliganistic adolescent fun and games (fun for them, not much fun for the victims) to international organized cyber-gangs and sophisticated state-sponsored advanced persistent threat attacks on critical infrastructure. That’s quite a metamorphosis.

Back in the hooliganistic era, for various reasons the cyber-wretches tried to infect as many computers as possible, and traditional antivirus software was designed specifically to defend systems against such mass attacks (a job it did pretty well). These days, new threats are just the opposite. The cyber-scum know anti-malware technologies inside out, try to be as inconspicuous as possible, and increasingly opt for targeted – pinpointed – attacks. And that’s all quite logical from their business perspective.

So sure, the underground has changed; the security paradigm, alas, has not: most companies still apply technologies designed for mass epidemics (i.e., outdated protection) to tackle modern-day threats. As a result, in the fight against malware, companies hold mostly reactive, defensive positions and are thus always one step behind the attackers. Since today we’re increasingly up against unknown threats for which no file or behavioral signatures yet exist, antivirus software often simply fails to detect them. At the same time, contemporary cyber-slime (not to mention cyber military brass) meticulously check how well their malicious programs stay hidden from AV. Not good. Very bad.

Such a state of affairs becomes even more paradoxical when you discover that the security industry’s arsenal today already contains alternative protection concepts, built into actual products, that are able to tackle new, unknown threats head-on.

I’ll tell you about one such concept today…

Now, in computer security engineering there are two possible default stances a company can take with regard to security: “Default Allow” – where everything (every bit of software) not explicitly forbidden is permitted for installation on computers; and “Default Deny” – where everything not explicitly permitted is forbidden (which I briefly touched upon here).

As you can probably guess, these two stances sit at opposite ends of the trade-off between usability and security. With Default Allow, all launched applications have carte blanche to do whatever they damn-well please on a computer and/or network, and AV takes on the role of the proverbial Dutch boy, keeping watch over the dyke and, should it spring a leak, frantically plugging the holes with his fingers (and holes of varying size, i.e., seriousness, appear regularly).

With Default Deny, it’s just the opposite – applications are by default prevented from being installed unless they’re included on the given company’s list of trusted software. No holes in the dyke – but then probably no excessive volumes of water running through it in the first place.
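To make the difference between the two stances concrete, here is a minimal sketch of the decision each one makes when a program tries to run. It assumes software is identified simply by file name and that the allow and deny lists are plain sets; the names `may_run`, `ALLOW` and `DENY` are hypothetical and not taken from any real product.

```python
from enum import Enum


class Stance(Enum):
    DEFAULT_ALLOW = "default_allow"
    DEFAULT_DENY = "default_deny"


def may_run(app, stance, allowlist, denylist):
    """Return True if `app` may run under the given default stance."""
    if stance is Stance.DEFAULT_ALLOW:
        # Default Allow: anything not explicitly forbidden is permitted.
        return app not in denylist
    # Default Deny: anything not explicitly permitted is forbidden.
    return app in allowlist


# Hypothetical example lists; real products identify software far more
# robustly than by file name.
ALLOW = {"winword.exe", "excel.exe"}
DENY = {"known_malware.exe"}

for app in ("excel.exe", "known_malware.exe", "brand_new_malware.exe"):
    print(app,
          may_run(app, Stance.DEFAULT_ALLOW, ALLOW, DENY),
          may_run(app, Stance.DEFAULT_DENY, ALLOW, DENY))
```

The two stances agree on known-good and known-bad programs; they differ only on the never-seen-before `brand_new_malware.exe`, which Default Allow lets through and Default Deny blocks.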

Besides unknown malware cropping up, companies (their IT departments in particular) have many other headaches connected with Default Allow. One: installation of unproductive software and services (games, instant messengers, P2P clients, and so on, depending on a given organization’s policy); two: installation of unverified and therefore potentially dangerous (vulnerable) software through which cyber-scoundrels can wriggle their way into a corporate network; and three: installation of remote administration software, which allows access to a computer without the user’s permission.

Re the first two headaches, things should be fairly clear. Re the third, let me bring some clarity with one of my EK Tech-Explanations!

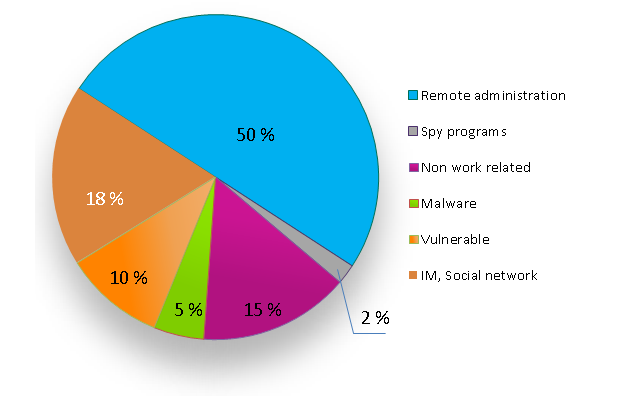

Not long ago we conducted a survey of companies in which we asked, “How do employees violate adopted IT-security rules by installing unauthorized applications?” The results are shown in the pie chart. As you can see, half the violations come from remote administration: employees or system administrators installing remote-control programs to reach internal resources remotely or to access computers for diagnostics and/or “repairs”.

The figures speak for themselves: it’s a big problem. Interestingly, the survey wasn’t able to clearly establish when, why, or by whom remote admin programs were installed (employees often replied that former colleagues installed them).

The danger lies in the fact that traditional AV doesn’t block such programs. Moreover, because they’re already installed on many PCs, chances are no one pays them much attention. And even if someone does notice them, nothing is done, since it’s presumed that IT installed them. This situation is typical of networks of any size. It turns out that an employee can install such a program for a colleague and it goes unnoticed. Or an employee can access his/her own or someone else’s computer from an external network. Even more dangerous, this quasi-legitimate loophole can be abused by cyber-scoundrels and ill-intentioned former employees just as easily as by current employees.

With Default Allow the corporate network becomes an overgrown jungle – a hodge-podge of all kinds of weird and wonderful software, with no one remembering when, why, or by whom it was installed. The only way to prevent such a hodge-podge? A Default Deny policy.

At first glance the idea of Default Deny looks rather simple. But that’s just on paper. In practice it’s far from straightforward. It has three main components: (1) a big, well-categorized database of verified legitimate programs; (2) a set of technologies that automate the implementation process as much as possible; and (3) easy-to-use tools for managing Default Deny mode after implementation.

And now we’re gonna take a commercial break. In other words, I’m gonna tell you about how the above three components do their dance in our corporate products.

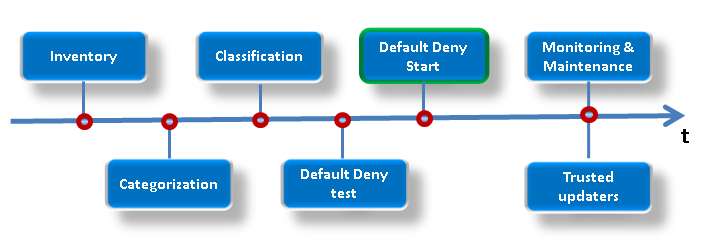

Overall, the process looks like this:

The first stage is automatic inventory: the system collects information on all applications installed on personal computers and network resources. The applications found are then categorized (also automatically). This is aided by our cloud database of verified software (whitelisting), which contains info on more than 530 million safe files split into 96 categories; it’s this database that helps IT decide what’s good and what’s bad. Already at this stage a clear picture emerges of what’s really going on in the network, and who’s being naughty.
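The inventory-and-categorize step can be illustrated with a small sketch. It assumes each installed file is identified by its SHA-256 hash and that a local dictionary stands in for the cloud whitelist; `WHITELIST`, `categorize` and the category names are hypothetical, and this is not how Kaspersky’s cloud lookup actually works.

```python
import hashlib
from collections import Counter

# Hypothetical stand-in for a cloud whitelist: file hash -> software category.
# A real service would be queried over the network; this dict is illustration only.
WHITELIST = {
    hashlib.sha256(b"office-suite-binary").hexdigest(): "Office",
    hashlib.sha256(b"media-player-binary").hexdigest(): "Multimedia",
    hashlib.sha256(b"remote-admin-binary").hexdigest(): "Remote administration",
}


def categorize(inventory):
    """Map each (host, file_name, file_bytes) record to a category, or 'Unknown'."""
    results = []
    for host, name, content in inventory:
        digest = hashlib.sha256(content).hexdigest()
        results.append((host, name, WHITELIST.get(digest, "Unknown")))
    return results


# Hypothetical inventory collected from three PCs.
inventory = [
    ("pc-01", "office.exe", b"office-suite-binary"),
    ("pc-02", "player.exe", b"media-player-binary"),
    ("pc-02", "rdesk.exe", b"remote-admin-binary"),
    ("pc-03", "tool.exe", b"never-seen-before"),
]
report = categorize(inventory)
summary = Counter(category for _, _, category in report)
print(summary)
```

Anything the whitelist doesn’t recognize lands in “Unknown”, which is exactly the pile IT needs to look at first; the remote-admin tool on `pc-02` also shows up by category rather than hiding in the noise.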

Next comes classification: labeling the software found as “Trusted” or “Forbidden” according to the security policy, while taking into account the particular “rights” of certain employees and groups. For example, the “multimedia” category may be accessible only to the marketing and PR departments and to no others. It’s also possible to set up a schedule for each application and employee, specifying when, by whom, and for what purpose software can be used. For example, once working hours are over, anyone and everyone can launch… SETI@Home. If they really feel the urge.
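Group rights and schedules combine naturally into a single per-launch check. The sketch below assumes a hypothetical `POLICY` table keyed by category, with a set of permitted groups (`"*"` meaning everyone) and an optional time window; the “Distributed computing” category stands in for the SETI@Home example and, like every name here, is an assumption for illustration.

```python
from datetime import time

# Hypothetical policy: category -> groups allowed ("*" = everyone) and an
# optional (start, end) time window during which the category may be used.
POLICY = {
    "Office": {"groups": {"*"}, "window": None},
    "Multimedia": {"groups": {"Marketing", "PR"}, "window": None},
    "Distributed computing": {"groups": {"*"}, "window": (time(18), time(8))},
}


def in_window(now, start, end):
    """True if `now` falls in [start, end); handles windows that wrap past midnight."""
    if start <= end:
        return start <= now < end
    return now >= start or now < end


def is_trusted(category, group, now):
    rule = POLICY.get(category)
    if rule is None:
        return False  # no rule for this category: forbidden by default
    if "*" not in rule["groups"] and group not in rule["groups"]:
        return False  # category not granted to this group
    if rule["window"] is not None and not in_window(now, *rule["window"]):
        return False  # outside the scheduled hours
    return True


print(is_trusted("Multimedia", "Marketing", time(10)))              # True
print(is_trusted("Multimedia", "Accounting", time(10)))             # False
print(is_trusted("Distributed computing", "Accounting", time(11)))  # False
print(is_trusted("Distributed computing", "Accounting", time(20)))  # True
```

Note that a category with no rule at all returns `False`: the Default Deny stance is built into the lookup itself rather than added as a separate check.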

Next, before Default Deny goes fully operational, its implementation needs to be tested. The testing produces a report showing who will lose access to what once each specific rule is introduced. You’ll agree that it might not be the best idea, for example, to deprive a department boss of Civilization :). And of course, this testing is also done automatically.
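Such a test run amounts to replaying current usage against the proposed rules without enforcing anything. Here is a minimal sketch, assuming usage records of the form (user, group, app, category) and proposed rules given as the set of categories each group may use; `dry_run`, the user names and `civ5.exe` are all hypothetical.

```python
from collections import defaultdict


def dry_run(usage, allowed_by_group):
    """Report, per user, which currently used apps a proposed rule set would block."""
    report = defaultdict(list)
    for user, group, app, category in usage:
        if category not in allowed_by_group.get(group, set()):
            report[user].append(app)
    return dict(report)


# Hypothetical current usage, as gathered during inventory.
usage = [
    ("alice", "Management", "civ5.exe", "Games"),
    ("alice", "Management", "excel.exe", "Office"),
    ("bob", "Sales", "excel.exe", "Office"),
    ("bob", "Sales", "p2p.exe", "P2P"),
]
proposed = {"Management": {"Office"}, "Sales": {"Office"}}
print(dry_run(usage, proposed))
# {'alice': ['civ5.exe'], 'bob': ['p2p.exe']}
```

Because nothing is enforced, IT can read the report, spot the boss about to lose Civilization, and adjust the rules before switching Default Deny on.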

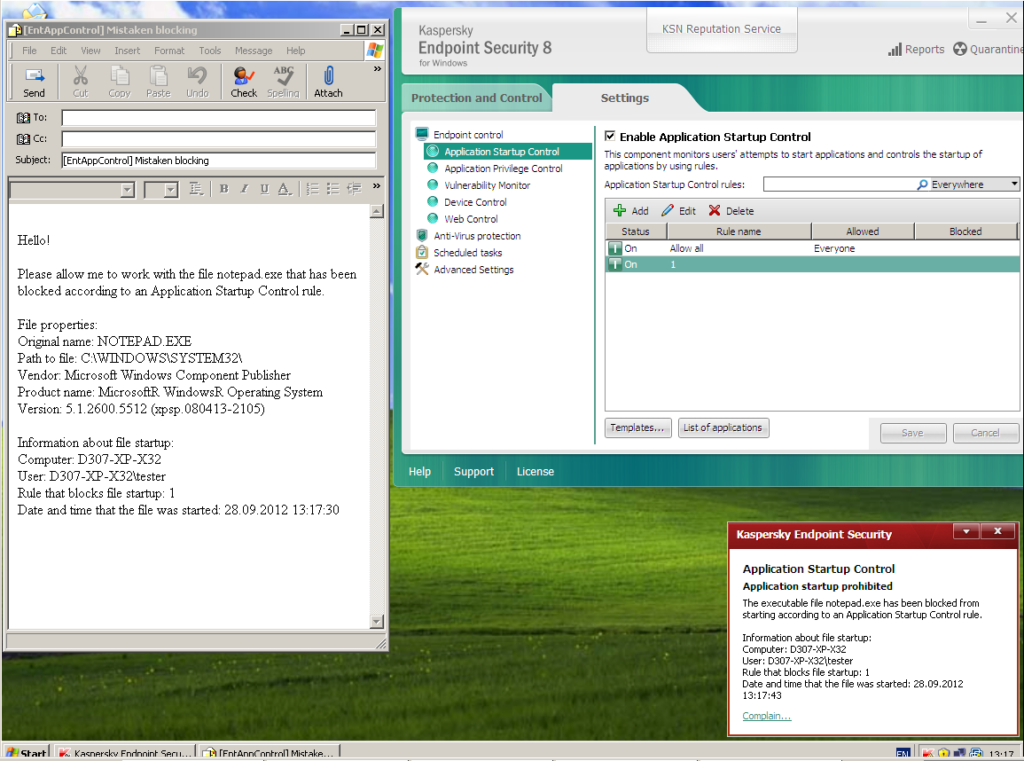

And finally, after Default Deny is implemented, routine monitoring and maintenance begin. With the help of Trusted Updater technology, trusted applications are updated as and when updates appear. IT receives detailed info about attempts to launch applications on the forbidden list: what, when, and by whom. Besides, users can request permission to install a given application directly from the KES (Kaspersky Endpoint Security) client.
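The monitoring side (logging blocked launches and handling permission requests) can be sketched as a tiny state machine. This is a hypothetical model, not the KES client’s actual interface: the `Enforcer` class, its methods and the application names are assumptions, and Trusted Updater is not modeled at all.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Enforcer:
    """Hypothetical Default Deny enforcer with a blocked-launch log and a request queue."""
    allowlist: set
    blocked_log: list = field(default_factory=list)
    pending_requests: list = field(default_factory=list)

    def launch(self, user, app, when):
        if app in self.allowlist:
            return True
        # Record what was blocked, when, and by whom, for IT to review.
        self.blocked_log.append((when, user, app))
        return False

    def request(self, user, app):
        # A user asks IT to permit an application.
        self.pending_requests.append((user, app))

    def approve(self, user, app):
        # IT approves: drop the request and add the app to the allowlist.
        self.pending_requests.remove((user, app))
        self.allowlist.add(app)


enforcer = Enforcer(allowlist={"excel.exe"})
enforcer.launch("carol", "remote_admin.exe", datetime(2012, 10, 3, 9, 15))
enforcer.request("carol", "graphics_editor.exe")
enforcer.approve("carol", "graphics_editor.exe")
print(enforcer.launch("carol", "graphics_editor.exe", datetime(2012, 10, 3, 9, 30)))  # True
print(enforcer.blocked_log)
```

The blocked attempt to run the remote-admin tool stays in the log for IT, while the approved editor runs without anyone touching the policy by hand.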

After reading all the above it would be fair to ask, “Why bother with antivirus at all if you’ve got such strict policies?”

Well, first of all, from the technological point of view (and this may come as a surprise), the concept of “antivirus” hasn’t existed for ages, even though the software category still does! This is because even the “simplest” home products (I’m talking about us, our Anti-Virus) are sophisticated and complex pieces of kit, while in corporate solutions classic antivirus makes up, ooh let’s see, probably only 10-15% of overall security. The rest is made up of systems for preventing network attacks, vulnerability scanning, proactive protection, centralized management, web traffic and external device control, and much more besides; and let’s not forget the Application Control behind the super-neat implementation of Default Deny mode. Nevertheless, antivirus as a signature-analysis technology still exists and still matters. It remains an effective tool for treating active infections and recovering files, and an indispensable part of multi-level protection.

Returning to Default Deny… At first it would seem to be a matter of take it, use it, and enjoy it. But in reality the situation isn’t quite so straightforward. No matter how much I talk to our sales guys and clients, the public at large is prejudiced against this technology, as with all things new. I often hear things like, “Ours is a creative company, and we don’t want to limit employees in their choice of software. We have a freedom-loving culture… and well, haven’t you heard about BYOD?” Hold it a minute. What are you banging on about?! Permit everything you consider necessary and safe! All it takes is adding new applications to the database or putting a check mark in the right place in the Security Center management console: ten seconds! Most folks act based on prejudices; fair enough. But Default Deny simply protects folks from making mistakes.

So yes, as you’ve probably figured out, I strongly recommend Default Deny. It has been tested and praised by West Coast Labs, and Gartner regularly names the approach as the future of IT security. I’m confident the world is on the verge of broad adoption of this technology, and that it will then be able to step up the fight against cyber-evil. Instead of reacting to new threats as they arise, companies will be able to set their own rules of the game and stay a step ahead of the computer underground.

All it takes is a bit of thinking put into managing unwise actions.